1. Introduction

After reading Brad McGehee’s guide to SQL Virtual Restore I was really intrigued by the capabilities of the product. So much so I downloaded it and thought I’d give it a whirl. This article is not so much a guide (you can read Redgate’s website or Brad’s blog for that) but more my experiences with the product and the conclusions I came to about its uses.

Please note, I’m not employed by Redgate or endorse any of their products, this is simply providing feedback of my early experiences. I will go through the good and bad times I’ve spent with the product and tried to be as honest as possible in showing my findings. Along with this I’ll provide one or two recommendations which I feel would further enhance the product.

As a side note, this blog was originally written 5 months ago as an article for SQLServerCentral.com so any issues that are still outstanding at the end of this document may now actually have been fixed but I don’t have access to the software anymore to prove or disprove this as i’m now with a different client.

2. Background

We’re currently in the process of moving our SQL environment to SQL 2008 and decided to go crazy and go virtual (VMWare) and on a new SAN Technology (NetApp) all at the same time. With this in mind, we have the facility of snap mirror and flexclones but we cannot fully utilise these technologies until we’ve migrated our live environment which would be an even more crazy idea to throw that in first so we’re moving the non-production environment first. Having a Dev/UAT/Support environment running an expensive SAN is something a lot of DBA’s (including myself) can only dream of but there is a cost implication with this – a massive cost in fact. Our non-production environments are (almost) exact replicas of our live environment so require 1TB of storage for each server and bearing in mind we only have 19TB available for all servers it’ll be utilised very quickly!

The live environment is planned for March 2011 so we’ve got at least 6 months in this situation. This is why I decided to delve into the realms of SQL Virtual Restore (SQLVR from now on) and test to see if it actually can do what it states and if it would work in our environment. If I could get it to work then the cost savings could be enormous.

3. Software (initial)

We currently backup our databases using Redgate’s SQL Backup v5.4 which unfortunately is not supported by SQL Virtual Restore as a known media type so for the purposes of testing I had to download Redgate’s SQL Backup v6.4.0.56 with a requirement to upgrade to this if all went well.

The version of SQLVR we downloaded and installed was v2.0 and with that came Hyperbac 5.0.10.0

4. Testing Environment

The server I used was a virtual server running on 2 vCPU’s and 4GB of RAM and running on a NetApp SAN (12 SAS disks, DP RAID). I won’t bore you too much with the full spec of the ESX hosts, speed or configuration of the SAN. If you want that information I could supply it at a later date.

4.1. Uncompressed DB Sizes

Data – 750GB (626GB not including Archives)

Logs – 150GB (120GB not including Archived)

4.2. Redgate SQL Backup Backupset

173GB compressed .sqb’s (including Archives and compression 1)

140GB compressed .sqb’s (excluding Archives and compression 1)

5. Testing of Virtual vs Physical

I wanted to test space and time savings we’d get out of this product as well as the performance impact of implementing virtual databases.

5.1. Restore Sizes

The initial mount of the vmdf’s and vldf’s came to a total size of < 1GB which gave the total environment size of 173GB for the backups + 1GB for the virtual files = 174GB. This saving a whopping 726GB disk space saving from the outset.

(750GB + 126GB) – 174GB = 726GB

5.2. Restore Timings

To fully restore all the required databases in their uncompressed state it took 3hrs 02mins.

To fully restore (mount) the .sqb backups took 1hr 19mins which is a saving of 1hr 43mins which is a 43% saving on restore time alone.

Fully Restored Size = 626GB / 48GB (log) (not including bankingarchive) and took 3hrs 2m

Fully Mounted Size = 1.37GB + 173GB (Backupset) = 174.37GB (including bankingarchive)

5.3. Large overnight process

I decided to test one of our long running overnight processes to test the capabilities of the virtual files and stress it to see how it copes under heavy process load. This would also provide me with potential file size increases as well as the performance of performing such a process.

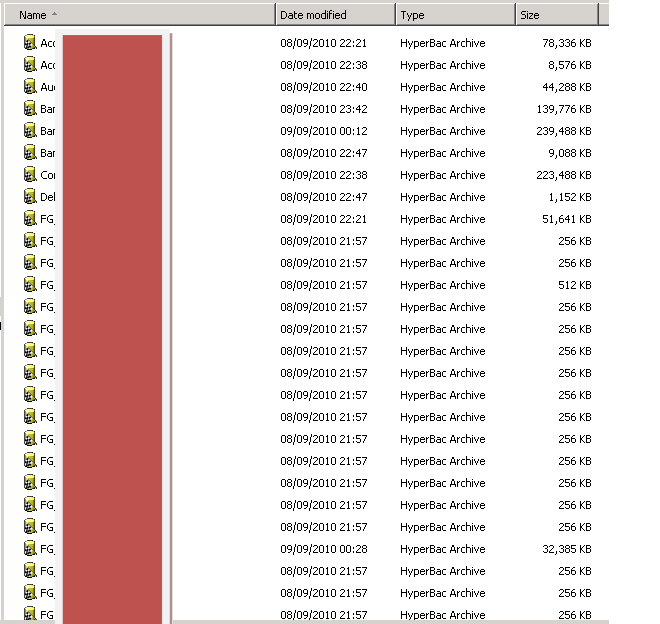

Here are the sizes of the virtual data files before the process was ran are shown below:

The size of the database which would be updated by this process was 139,776kb and after the process was complete there was a 2.5GB increase in file size (1.67GB vmdf and 830MB vldf)

This process would be ran everyday in UAT and would therefore rack up 17.5GB of data space increase over the course of the week. This is not including any of the other testing / processes that would be running during that time. 17.5GB is not a great deal of space in the grand scheme of things and the 726GB saving more than makes up for that increase, even if I were to multiply it by 10 then 175GB increase is still a significant saving.

I decided to then run the same process using physical databases and the timings showed that the process whilst running on physical databases was 30% quicker. It took 50 minutes as opposed to 72 when virtual. I believe this to be purely down to the fact that the virtual datafile sizes can not be pre-allocated and every single change requires the file to expand therefore causing an IO overhead for every change written to the database.

6. Limitations Experienced

6.1. Memory Being Consumed

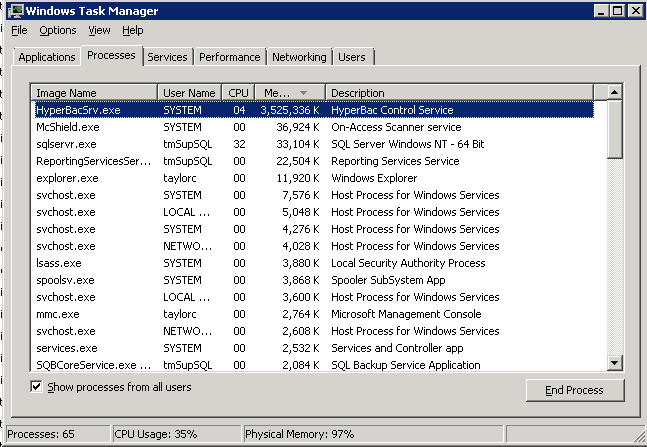

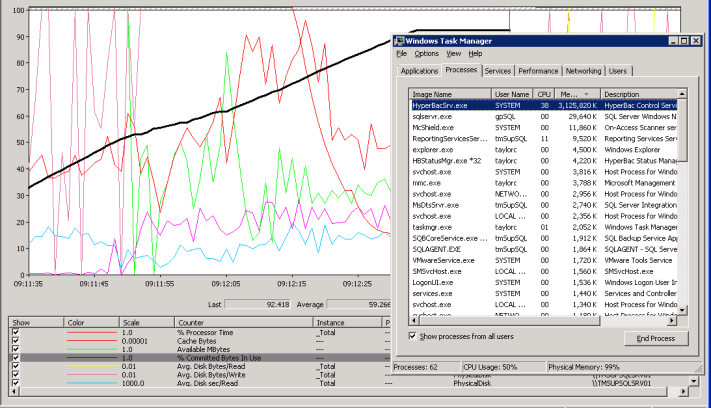

One of the first things I noticed when testing SQLVR was the large amounts of RAM it utilised. In order for me to get the relevant syntax required, I launched the GUI and selected a database backup file to mount and this selecting of the database backup file caused the HyperbacSrv.exe to consume all available RAM resources on the server…..but worst of all, wouldn’t release it after closing the GUI.

Black line being the % Commited Bytes in Use:

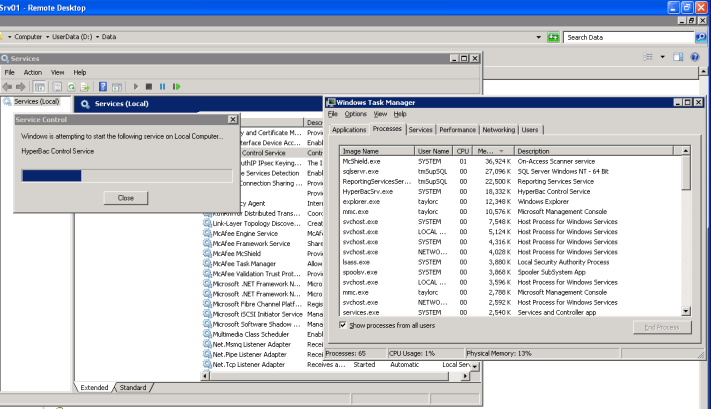

Almost 4GB of ram gone in about 20seconds!!! The only way I could release the memory was to restart the HyperbacSrv.exe service as shown below:

What is didn’t realise was that if you restart the HyperBacSrv.exe then YOU MUST restart the sql service. If you don’t then the databases will not reconnect. Depending on the size of the databases, they can take several (over 10mins in my case) minutes to recover and be Online.

NOTE: This bug has now been fixed by Redgate in their latest version

6.2. Fix for Memory issue

After liaising with Jeff Aven about this we found that it was due to an issue with the process doing a RESTORE HeaderOnly on the backup file as opposed to a RESTORE FilelistOnly. I was supplied with a couple of patched versions (Hyperbac v5.0.24.1 then Hyperbac v5.0.24.3) which had addressed this and rectified the issue.

NOTE: This bug has now been fixed by Redgate in their latest version

6.3. Large virtual files if multiple restore threads ran

I ran a test to see if multiple restores could be ran simultaneously which worked with the obvious IO overhead and something a bit strange which I didn’t realise at first – the virtual files are huge! Some around 9GB!!!

I believe this to be a memory resource issue as i’ve also tested restoring a single DB on a server with only 2GB of RAM and found that it produced a vmdf file of 13GB!!! I’m currently liaising with Redgate again to investigate this issue.

7. Other intermittent issues

7.1. Incorrectly formed backup files

Msg 3241, Level 16, State 0, Line 1

The media family on device ‘d:\Backup\DB.sqb’ is incorrectly formed. SQL Server cannot process this media family.

Msg 3013, Level 16, State 1, Line 1

RESTORE DATABASE is terminating abnormally.

RESTORE DATABASE DB FROM DISK = ‘d:\Backup\DB.sqb’ WITH RECOVERY, STATS, REPLACE;

Msg 3241, Level 16, State 0, Line 1

The media family on device ‘d:\Backup\ DB.sqb’ is incorrectly formed. SQL Server cannot process this media family.

Msg 3013, Level 16, State 1, Line 1

RESTORE DATABASE is terminating abnormally.

This happened every now and again and I couldn’t pinpoint why. Quite frustrating when trying to build a dynamic restore of all files with their relevant filegroups/files.

If you keep running the script eventually you’ll get this:

Msg 10054, Level 20, State 0, Line 0

A transport-level error has occurred when sending the request to the server. (provider: TCP Provider, error: 0 – An existing connection was forcibly closed by the remote host.)

Run the script again and then it’ll work!!!

NOTE: This bug has now been fixed by Redgate in their latest version

7.2. Replace command doesn’t actually seem to replace

I found that when restoring the database using Replace, it didn’t reset the virtual file sizes, it seems to append to the existing vmdf and vldf files as opposed to actually replacing them.

NOTE: This bug has now been fixed by Redgate in their latest version

8. 500k row insert performance test

I decided to run some quick performance tests based on Glenn Berry’s 1 million row insert test (http://www.sqlservercentral.com/blogs/glennberry/archive/2010/02/07/some-initial-insert-test-benchmarks-on-sql-server-2008-r2.aspx). I shortened this to 500k and compared it to a physical database on the same server and truncated the BigTable before each run.

8.1. Looping insert (exec spAddDataToBigTable 500000, 0)

Database Type StartTime EndTime ElapsedTime No.OfRows RowsPerSec

Physical Sep 10 2010 3:14PM Sep 10 2010 3:15PM 58 500000 8620

Virtual Sep 10 2010 1:45PM Sep 10 2010 2:04PM 1083 500000 461

8.2. Bulk insert (exec spAddDataToBigTable 500000, 1)

Database Type StartTime EndTime ElapsedTime No.OfRows RowsPerSec

Physical Sep 10 2010 3:16PM Sep 10 2010 3:17PM 79 500000 6329

Virtual Sep 10 2010 1:29PM Sep 10 2010 1:31PM 109 500000 4587

From these results you can see the impact that having to autogrow the virtual files for every single record has on the timings. The batched insert does still show a performance impact but this is not as significant as the previous test.

9. Conclusion

Although during the short time i’ve spent with SQLVR i’ve had a number of issues with memory, file sizings and actually getting a file to restore i can honestly say extremely impressed with this product. Not only are we saving on average £3000 per virtual SQL Server we create due to the reduction of SAN storage utilised but i can also get a Dev/UAT environment up and running significantly quicker than before. Couple this with all the benefits of using it as a quick and easy way to check your databases for corruption or even testing a procedure / index change without impacting other environments I’d recommend this product to anyone.

So, to answer the question is SQLVR truly a friend or in fact indeed a foe, from personal experience I’d like to think of it as a friend. With a few bumps n scrapes along the way, It has certainly helped me out in my time of need.

One thing I’d like to add to this, in no way shape or form am i suggesting everyone utilizes this product in the same way we have, for some the performance impact could be too great to implement in a UAT or even Dev environment but I’ve thrown into the mix the possibility that it can actually be done and the benefits you can get out of doing so.

10. Recommendations

I think the biggest improvement I’d like to see in later releases is the ability to pre-allocate virtual file sizes. I’m not sure if this is actually possible but I believe that if it is then the one major drawback to this product (autogrow / IO performance) could be alleviated somewhat. This could also help in the fact that at present every time I restore a database using SQLVR I cannot guarantee how much space it will utilize as every time I have done so far they’ve been different, in some case massively different!

I’d like to see the GUI updated to include a simple idea to allow data / logs to be put on a different drive. I’m not sure how much performance impact (never tested it) there is by having the vmdf and vldf’s on the same drive. You can get around this by scripting the restore.

11. Thank you’s

I’d like to give a big shout out to Jeff Aven and the support/development teams for working so swiftly in getting any issues that I uncovered rectified as soon as possible. Jeff is a man who doesn’t seem to sleep and was always on hand anytime of the day or night to have any issues addressed asap.

Hello Chris,

I followed the link over from my article on Simple-Talk. This is excellent stuff. You’ve gone further with the testing than I have to date. Well done. It looks like most of the bugs you hit have been fixed by releases, so that’s great to hear. I think the concept of being able to determine an allocation size for the virtual files is a great idea. I’ll certainly pass it on (although I can tell you that you’re already being read by the dev team). Setting separate locations through the GUI might be a good idea too. Although, I tend to default to using TSQL, so there I get immediate control to do exactly what you’re suggestiong.

Thanks for posting this. I really appreciate you validating the approach I was advocating.

0 Pingbacks